Author: Sofie Aebert

Edited by: –

Last updated: October 8, 2025

Executive summary

Corporate Digital Responsibility (CDR) extends Corporate Social Responsibility into the digital domain by focusing on how organizations design, operate, and monitor data and technology to protect people and society. It emphasizes privacy-by-design, algorithmic accountability, transparency, and inclusion so that digital innovation strengthens—rather than erodes—trust.

CDR responds to the speed and scale of AI, data, and platforms. It addresses risks such as bias, opaque automation, manipulative design, security incidents, and digital exclusion. A managerial lens turns principles into practice through leadership mandates, risk-based policies, clear decision rights, lifecycle documentation, and human oversight where stakes are high.

Key application areas include AI and algorithmic ethics, data privacy and digital trust, and transparent stakeholder communication. Leaders set the tone and allocate resources; cross-functional teams translate intent into product, data, HR, procurement, and UX decisions. Proportional governance concentrates stronger controls on high-impact use cases (e.g., talent, credit, safety) and lighter checks on low-risk tools.

Implementation typically follows five phases: initiation (executive mandate, materiality, baseline), design (policies, standards, roles, and enablement), operationalization (embedding checkpoints into delivery workflows), evaluation (KPIs, audits, and stakeholder dialogue), and maturity (integration into strategy, culture, and incentives). Tools such as model cards, datasheets for datasets, bias testing in continuous integration (CI) pipelines, and data protection impact assessments (DPIAs) make responsibility auditable and repeatable.

Regulation and investor expectations are converging on lifecycle governance, exemplified by risk-based AI requirements (such as the EU AI Act) and emerging management system standards (such as ISO/IEC 42001). Organizations that institutionalize CDR can differentiate on trust, resilience, and innovation, especially when they align digital responsibility with sustainability objectives like efficient cloud use and device lifecycle management. Research gaps remain around SMEs, sector differences, long-term cultural effects, and links between CDR maturity and performance.

In short, CDR turns ethical intent into operational discipline. By pairing culture and governance with practical tools and continuous feedback, organizations can deliver trustworthy digital products and services while meeting stakeholder and regulatory expectations.

1 Introduction

1.1 Background and motivation

Digital transformation is one of the most significant and far-reaching developments shaping contemporary organizations. New technologies such as artificial intelligence (AI), algorithmic decision systems, big data analytics, and platform-based business models offer companies unprecedented opportunities for innovation, efficiency, and customer engagement.1Brynjolfsson, E. & McAfee, A. The Second Machine Age: Work, Progress, and Prosperity in a Time of Brilliant Technologies (Norton, 2014). Recent surveys indicate that more than two-thirds of European companies have already implemented digital tools at scale, illustrating the pace at which these technologies are becoming embedded in everyday business processes.2Eurostat. Digitalisation in Europe – 2025 edition (interactive publication; data year 2024) (Eurostat, 2025). At the same time, these technologies present substantial risks and raise normative questions that extend far beyond technical optimization.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). Concerns about data privacy, algorithmic bias, opaque AI systems, manipulative design choices, and digital exclusion have intensified public debates about the ethical responsibilities of companies in digital environments.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). These concerns are amplified by the growing role of digital technologies in shaping human behavior, market access, and social participation. Organizations therefore operate in an environment where digital actions can have wide-ranging societal consequences, even if such effects are unintended or indirectly caused by automated systems.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). Against this backdrop, digital responsibility has become a central concern for both scholars and practitioners. 
It refers not only to compliance with legal standards in the use of technology, but also to the active consideration of the ethical, cultural, and societal implications of digital innovation.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). While this demand resonates with earlier developments in Corporate Social Responsibility (CSR), it also marks a qualitative shift in scope, speed, and complexity. CSR frameworks were primarily designed to address social, environmental, and economic dimensions of corporate activity; they were not conceived to capture the unique challenges of data-driven and algorithmic processes that operate autonomously, invisibly, and at scale.5Carroll, A. B. Carroll’s pyramid of CSR: taking another look. Int. J. Corp. Soc. Responsib. 1, 3 (2016). This gap has become visible in public controversies ranging from the misuse of personal data to discriminatory recruitment algorithms and the opaque functioning of recommendation systems.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). As a result, the concept of Corporate Digital Responsibility (CDR) has gained increasing attention. CDR builds upon the normative foundations of CSR but extends them to include technological practices, governance structures, and digital ethics.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). In contrast to CSR, which often remains broad and multi-dimensional, CDR explicitly focuses on the ethical management of digital technologies and their impact on stakeholders.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). It is therefore not merely a subset of CSR, but an emergent domain that requires its own theoretical, ethical, and managerial framing. 
This thesis responds to these developments by clarifying the conceptual foundations of CDR, highlighting its cultural and systemic underpinnings, and examining how organizations and their leadership can integrate digital responsibility into strategy, governance, and daily practice.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). To provide a neutral baseline for later analysis, this section closes by noting that CDR’s lifecycle-oriented and socio-technical focus demands organizational mechanisms, not only principles.7Vakkuri, V. et al. Time for AI (Ethics) Maturity Model Is Now. arXiv 2101.12701 (2021).

1.2 Research focus and objective

Despite its growing importance, the concept of Corporate Digital Responsibility remains underdeveloped in both theory and practice. Existing studies frequently focus on individual aspects such as AI ethics, data governance, or digital sustainability, but rarely provide an integrated perspective that connects these strands into a coherent framework.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). As a consequence, the academic debate lacks conceptual clarity, and organizations face difficulties in translating abstract principles into managerial practice. In particular, small and medium-sized enterprises, long-term cultural impacts, and the integration of CDR into broader environmental, social, and governance (ESG) strategies remain insufficiently explored.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). This thesis addresses these gaps through an integrative literature review combining academic and practitioner-oriented sources.8Snyder, H. Literature reviews as a research methodology: An overview and guidelines. J. Bus. Res. 104, 333–339 (2019). The contribution lies in synthesizing existing definitions and approaches, identifying recurring themes and tensions, and clarifying how CDR can be embedded into organizational structures and cultures.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). The perspective adopted is explicitly managerial: it focuses on leadership responsibilities, governance mechanisms, and cultural preconditions for digital responsibility, thereby offering both theoretical consolidation and practical orientation.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). To keep the argument transparent and reproducible, the review traces how definitions, mechanisms, and cultural anchors converge into a staged model that later guides implementation.7Vakkuri, V. et al. Time for AI (Ethics) Maturity Model Is Now. arXiv 2101.12701 (2021). The central research objective can therefore be formulated as follows: to identify, analyze, and synthesize existing literature on Corporate Digital Responsibility in order to clarify its conceptual foundations, cultural underpinnings, and practical implementation strategies from a managerial perspective. The guiding questions include how CDR is defined and differentiated from CSR, which systemic and cultural factors influence its adoption, and which governance strategies and resources organizations can employ to implement digital responsibility successfully. By addressing these questions, the thesis contributes to a more systematic understanding of CDR as a cross-cutting concern that bridges normative ethics, digital innovation, and management responsibility. It also sets the stage for evaluating whether commonly proposed maturity models and assurance mechanisms can be meaningfully operationalized in diverse organizational contexts.7Vakkuri, V. et al. Time for AI (Ethics) Maturity Model Is Now. arXiv 2101.12701 (2021).

1.3 Structure of the thesis

The structure of this thesis follows a logical progression from conceptual foundations to practical application. Chapter 2 outlines the methodological approach by explaining the choice of an integrative literature review, describing the process of data collection and selection, and clarifying how the analysis was conducted.8Snyder, H. Literature reviews as a research methodology: An overview and guidelines. J. Bus. Res. 104, 333–339 (2019). Chapter 3 provides the theoretical background, beginning with the historical development from Corporate Social Responsibility to Corporate Digital Responsibility, before addressing definitions, application fields, cultural and systemic perspectives, and existing maturity models. This theoretical discussion highlights research gaps and sets the stage for Chapter 4, which examines the practical implementation of CDR within organizations. Chapter 4 discusses managerial responsibilities, organizational drivers and barriers, and evaluation mechanisms, and it offers recommendations, insights into best practices, and a perspective on upcoming challenges and regulatory trends. The overall composition thus leads the reader from the identification of the research problem, through methodological justification and conceptual clarification, to the exploration of concrete implications for corporate practice. To ensure coherence across chapters, key terms are used consistently in American English and aligned with the managerial lens adopted throughout.

2 Theoretical foundations

2.1 Historical development

Corporate social responsibility in its modern formulation has been an important and evolving topic since the 1950s. The modern era of CSR is most appropriately marked by the publication of Howard R. Bowen’s Social Responsibilities of the Businessman (1953).5Carroll, A. B. Carroll’s pyramid of CSR: taking another look. Int. J. Corp. Soc. Responsib. 1, 3 (2016). Bowen argued that large corporations held significant power in society and posed the fundamental question: “What responsibilities to society may businessmen reasonably be expected to assume?”5 This marked the beginning of CSR as a formalized field of academic and managerial debate.

Early critics quickly emerged. In The Dangers of Social Responsibility (1958), Theodore Levitt contended that business had only two tasks: honesty in dealings and the pursuit of profit.5Carroll, A. B. Carroll’s pyramid of CSR: taking another look. Int. J. Corp. Soc. Responsib. 1, 3 (2016). Similarly, Milton Friedman famously insisted in Capitalism and Freedom (1962) that social issues were not the concern of corporations but should be resolved through the free-market system.5 These positions highlight the enduring tension between profit maximization and societal expectations.

Despite such resistance, the concept of CSR gained traction throughout the 1960s and 1970s, driven by social movements such as civil rights, consumer protection, and environmentalism. A milestone came with Archie B. Carroll’s four-part definition (1979), which described CSR as encompassing economic, legal, ethical, and discretionary (philanthropic) expectations that society has of organizations at a given point in time.5Carroll, A. B. Carroll’s pyramid of CSR: taking another look. Int. J. Corp. Soc. Responsib. 1, 3 (2016). Carroll later translated this model into the well-known CSR pyramid, where economic responsibility forms the base, followed by legal, ethical, and philanthropic responsibilities. Importantly, Carroll emphasized that ethics permeates all dimensions of the pyramid and that organizations are expected to fulfill these responsibilities simultaneously rather than sequentially.5Carroll, A. B. Carroll’s pyramid of CSR: taking another look. Int. J. Corp. Soc. Responsib. 1, 3 (2016). Since the 1990s, CSR has been globalized and institutionalized, adapting to different cultural contexts and becoming increasingly linked to sustainability and stakeholder management. Carroll concludes that CSR has a sustainable and optimistic future, supported by drivers such as globalization, institutionalization, reconciliation with profitability, and academic proliferation.5Carroll, A. B. Carroll’s pyramid of CSR: taking another look. Int. J. Corp. Soc. Responsib. 1, 3 (2016). However, while CSR has provided an important foundation for integrating business and societal concerns, its frameworks were not designed to address the challenges of the digital age. Issues such as data protection, algorithmic transparency, artificial intelligence, and digital inclusion extend beyond the scope of traditional CSR.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). 
Against this backdrop, the concept of Corporate Digital Responsibility has emerged, expanding the logic of CSR into digital ecosystems and providing a normative framework for responsible technological governance.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021).

2.2 Defining corporate digital responsibility

While Corporate Social Responsibility has long served as a framework for aligning corporate activities with societal expectations, its traditional approaches have increasingly shown limitations in the digital age.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). CSR emphasizes accountability, sustainability, and stakeholder orientation beyond pure profit maximization, yet it was not designed to address the speed, scale, and complexity of digital transformation. Digital technologies have introduced new power asymmetries, data-driven decision-making, algorithmic bias, and surveillance risks that go far beyond the conventional CSR focus.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). As Lobschat et al. (2021) argue, companies are now required to assume responsibility not only for their social and environmental footprint, but also for their digital infrastructures, practices, and impacts.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). This recognition has led to the emergence of Corporate Digital Responsibility. CDR builds on the normative foundations of CSR, but expands them to include issues such as data ethics, algorithmic transparency, responsible AI, and digital inclusion.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). In doing so, it redefines corporate responsibility in the context of rapidly evolving technological environments and positions digital accountability as a central concern of modern corporate governance.

CDR can be understood as the set of shared values and norms that guide how an organization designs, operates, reviews, and refines digital technologies and data in ways that benefit stakeholders.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). It encompasses a wide range of practices including data protection, algorithmic fairness, responsible AI, cybersecurity, and digital well-being.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). CDR is inherently multidimensional, covering technological, ethical, cultural, and managerial dimensions.

Unlike isolated concepts such as AI ethics or digital compliance, CDR represents an integrated framework that connects corporate values with digital governance structures.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Scholars such as Floridi et al. (2018) have laid the groundwork for digital ethics, particularly in the context of AI development.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). However, CDR goes beyond technical principles by embedding ethical reflection into organizational processes, leadership, and stakeholder dialogue.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). It addresses not only how technologies are designed and used, but also how responsibility is distributed, perceived, and institutionalized within firms.

In line with stakeholder theory, the firm’s purpose is framed as creating value for a broad set of stakeholders rather than only for shareholders.9Freeman, R. E., Phillips, R. & Sisodia, R. Tensions in stakeholder theory. Bus. Soc. 59(2), 213–231 (2020). CDR, in this logic, expands the field of stakeholder responsibility into the digital domain, where non-human actors (e.g., algorithms, platforms) increasingly shape stakeholder experiences and outcomes.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021).

2.3 Application fields of CDR

Corporate Digital Responsibility manifests itself not only as an abstract normative framework, but also in concrete application domains where digital technologies directly affect stakeholders. These application fields illustrate how CDR translates into managerial practice and highlight the areas where ethical tensions and governance challenges are most visible.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Among the most prominent domains are artificial intelligence and algorithmic ethics, data privacy and digital trust, as well as transparency and stakeholder communication.

The identification of these domains is supported by the existing literature, which consistently highlights artificial intelligence, data privacy, and transparency as the most pressing areas where CDR becomes tangible in practice.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). While these areas are not exhaustive, they represent the core contexts in which digital responsibility raises concrete ethical, managerial, and governance challenges.

2.3.1 Artificial intelligence and algorithmic ethics

Artificial intelligence poses one of the most pressing challenges for Corporate Digital Responsibility. The increasing use of algorithms in decision-making, ranging from customer segmentation to hiring and pricing, raises complex ethical questions concerning bias, transparency, and accountability.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). A central concern is algorithmic bias, which can reinforce structural inequalities if training data reflect societal prejudices. CDR requires organizations to address these risks proactively.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). This includes conducting bias audits, involving diverse stakeholders in system design, and testing models for disparate impact. Transparency is a key enabler of trust: users and affected individuals must be able to understand how algorithmic decisions are made and contest them where appropriate.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). Guidance from the Organisation for Economic Co-operation and Development (OECD) stresses that AI should be designed and deployed in ways that uphold human agency, avoid harm, and embed accountability mechanisms.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). Yet many organizations lack internal frameworks for translating such principles into practice.7Vakkuri, V. et al. Time for AI (Ethics) Maturity Model Is Now. arXiv 2101.12701 (2021). AI development is often outsourced or driven by performance goals rather than ethical safeguards.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). Without interdisciplinary collaboration and leadership oversight, ethical risks are likely to go unnoticed until they trigger reputational or legal damage.
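To illustrate what “testing models for disparate impact” can involve at its simplest, the following Python sketch compares the rate of favorable outcomes between two groups and flags ratios below 0.8, a rule of thumb known as the “four-fifths” rule in US employment-selection guidance. The threshold, the group labels, the sample data, and the function names are illustrative assumptions; none of the sources cited here prescribes this particular test.

```python
from collections import defaultdict

# Illustrative threshold borrowed from the "four-fifths" rule of thumb in US
# employment-selection guidance; an organization may choose a different one.
FOUR_FIFTHS = 0.8


def selection_rates(decisions):
    """Share of favorable outcomes per group.

    `decisions` is an iterable of (group, favorable) pairs, where `favorable`
    is True if the system produced a positive outcome for that case.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, favorable in decisions:
        totals[group] += 1
        if favorable:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions, protected, reference):
    """Selection rate of the protected group divided by that of the reference group."""
    rates = selection_rates(decisions)
    if rates[reference] == 0:
        raise ValueError("reference group has no favorable outcomes")
    return rates[protected] / rates[reference]


if __name__ == "__main__":
    # Hypothetical screening outcomes: (group, shortlisted?)
    sample = ([("A", True)] * 30 + [("A", False)] * 70
              + [("B", True)] * 45 + [("B", False)] * 55)
    ratio = disparate_impact_ratio(sample, protected="A", reference="B")
    print(f"disparate impact ratio: {ratio:.2f}")  # 0.30 / 0.45, prints 0.67
    if ratio < FOUR_FIFTHS:
        print("flag for human review: ratio below the four-fifths threshold")
```

A ratio below the threshold does not by itself establish discrimination; in this sketch it only triggers the bias audit and human review described above, which is where managerial accountability takes over from the metric.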

A further issue is the lack of clear responsibility. When AI systems behave in unexpected ways, accountability is often diluted across departments or external vendors. Floridi et al. (2018) argue that the core ethical issue in AI is human responsibility for its use rather than the system’s autonomy.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). CDR thus demands not only ethical guidelines but also structures for decision traceability and intervention mechanisms.
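What “decision traceability and intervention mechanisms” might look like at the level of a single automated decision can be sketched in Python as a per-decision record that names the system, its exact version, and an accountable human role, and that lets a named reviewer override the outcome. All field names, the example system, and the use of a SHA-256 digest of the inputs are hypothetical design choices for illustration; hashing links the record to a case file without copying raw inputs into the log, but it does not by itself anonymize personal data.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    """One traceable automated decision; all field names are illustrative."""
    system: str             # which algorithmic system produced the decision
    model_version: str      # exact version, so the decision can be reproduced
    accountable_owner: str  # named human role answerable for the system
    input_digest: str       # SHA-256 of the inputs, links the record to the case file
    outcome: str            # what the system decided
    reviewer: Optional[str] = None       # set when a human intervenes
    final_outcome: Optional[str] = None  # outcome after human review, if any
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def effective_outcome(self) -> str:
        """The outcome that actually applies: a human override takes precedence."""
        return self.final_outcome if self.final_outcome is not None else self.outcome


def make_record(system, model_version, owner, inputs, outcome):
    """Log an automated decision, storing only a digest of its inputs."""
    digest = hashlib.sha256(
        json.dumps(inputs, sort_keys=True).encode("utf-8")).hexdigest()
    return DecisionRecord(system, model_version, owner, digest, outcome)


def override(record, reviewer, new_outcome):
    """Intervention mechanism: a named reviewer replaces the automated outcome."""
    record.reviewer = reviewer
    record.final_outcome = new_outcome


if __name__ == "__main__":
    rec = make_record("cv-screening", "1.4.2", "Head of Talent Acquisition",
                      {"applicant_id": 17, "score": 0.42}, "reject")
    override(rec, "hr-reviewer-03", "shortlist")
    print(rec.effective_outcome(), rec.reviewer)  # shortlist hr-reviewer-03
```

Keeping the original automated outcome alongside the human decision, rather than overwriting it, is deliberate in this sketch: it preserves the evidence needed to ask later why the system and the reviewer disagreed.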

From a governance perspective, some organizations have begun to establish AI ethics boards or appoint digital ethics officers.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). However, these measures are only effective if they are embedded in broader cultural and leadership structures. Deloitte notes that without transparent governance, organizations will struggle to build trust in AI, regardless of technical performance.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). Building such trust requires ongoing reflection, public engagement, and mechanisms for redress in case of harm.

In sum, ethical AI is not a technical feature but a managerial and cultural commitment.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). CDR in the context of AI calls for anticipatory thinking, stakeholder involvement, and a shift from technological determinism to responsible design.

2.3.2 Data privacy and digital trust

Data privacy is a central pillar of Corporate Digital Responsibility (CDR), as it directly affects how individuals experience autonomy, trust, and fairness in digital interactions.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). The increasing collection and use of personal data, whether for personalization, analytics, or automation, has heightened public awareness of privacy risks and expectations.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). CDR goes beyond compliance with data protection laws such as the General Data Protection Regulation (GDPR).6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). It includes normative considerations about what constitutes justifiable data use and how organizations can foster digital trust. The OECD links trust in digital systems to users’ perceived control over their personal data, supported by transparency, explainability, and meaningful consent.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). However, many companies still treat privacy as a legal obligation rather than a strategic or ethical concern.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). This reactive mindset can undermine public confidence, especially when data-driven innovations outpace internal governance. Deloitte reports that proactive and transparent privacy management is associated with stronger long-term trust and customer loyalty.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). A strong example of an integrated approach to data privacy and digital ethics can be found at SAP. 
The company has established a centralized data privacy governance model based on global privacy policies, a company-wide training program, and a dedicated privacy organization. Moreover, SAP has formed a Data Ethics Steering Committee that reviews data-driven technologies and ensures alignment with human rights and ethical values.12SAP SE. Integrated Report 2023 (SAP SE, 2024). SAP states that its privacy program aims to strengthen digital trust by promoting transparency, user control, and responsible innovation.12SAP SE. Integrated Report 2023 (SAP SE, 2024).

These efforts are embedded in SAP’s broader CDR strategy, which includes digital ethics guidelines, interdisciplinary review processes for AI applications, and public engagement through transparency reports.12SAP SE. Integrated Report 2023 (SAP SE, 2024). This shows how privacy governance can be both operational and value-based, anchored in leadership, culture, and ongoing dialogue.

Thus, digital trust is not merely the result of compliance mechanisms, but a product of responsible data practices, ethical design choices, and transparent communication.12SAP SE. Integrated Report 2023 (SAP SE, 2024). CDR in the domain of data privacy requires organizations to anticipate concerns, involve affected stakeholders, and institutionalize mechanisms for ethical oversight.

2.3.3 Transparency and stakeholder communication

Transparency plays a central role in Corporate Digital Responsibility (CDR), not only as a governance principle, but as a means of building and maintaining stakeholder trust.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Ethical digital transformation requires open communication about the technologies used, the rationale behind decisions, and their potential societal impact.

Transparent communication helps organizations align internal practices with external expectations.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). It enables customers, employees, partners, and the public to evaluate whether digital systems are fair, secure, and respectful of rights. According to the OECD, sustained stakeholder dialogue is essential to align digital innovation with societal expectations and values.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). This requires moving beyond technical disclosures or legal disclaimers. Ethical communication involves providing accessible explanations of algorithmic decisions, involving affected parties in the design of digital systems, and responding meaningfully to criticism.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Ethical leadership thus involves not only responsible choices but also the ability to explain them clearly and consistently to those affected, a point Deloitte emphasizes as well.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). Internally, transparency also enables reflection and ethical learning. Open dialogue about risks and value tensions allows organizations to detect problems early and foster a culture of shared responsibility.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). CDR therefore depends on both formal communication strategies and informal spaces for ethical discourse.

Some companies have begun to publish nonfinancial reports or ethical impact assessments as part of their CDR approach. However, these efforts are only credible if they are embedded in a broader process of stakeholder engagement and cultural accountability.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). Transparency is not an end in itself, but a means to foster digital legitimacy, resilience, and ethical alignment.

2.4 Theoretical anchoring: Cultural and systemic perspectives

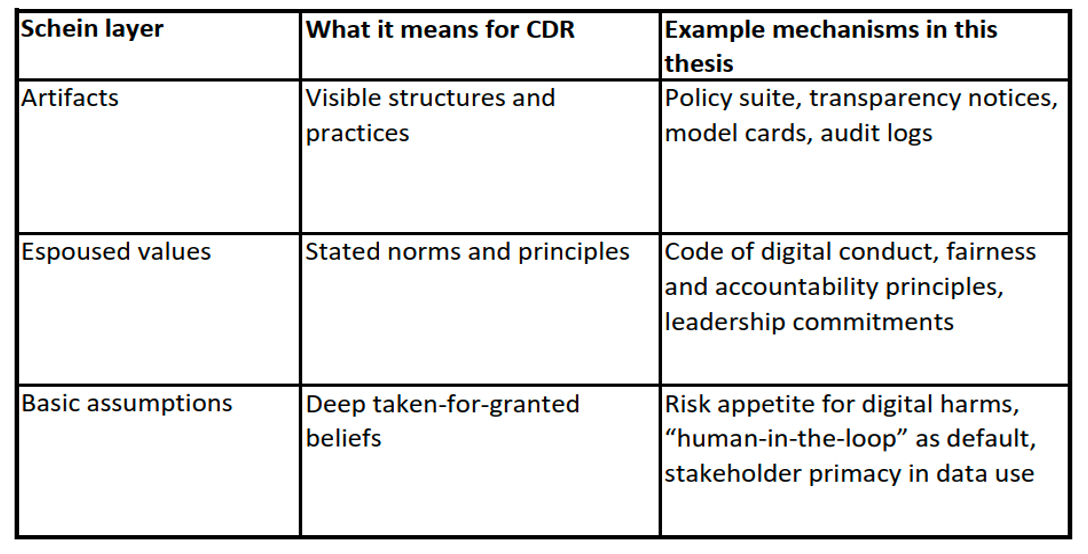

The theoretical anchoring of CDR is crucial because existing CSR frameworks mainly describe responsibilities, but they do not sufficiently explain how organizations internalize and reproduce responsibility.5Carroll, A. B. Carroll’s pyramid of CSR: taking another look. Int. J. Corp. Soc. Responsib. 1, 3 (2016). Cultural and systemic theories address this gap by focusing on values, norms, and communication structures that shape organizational behavior.13Schein, E. H. & Schein, P. A. Organizational Culture and Leadership, 5th edn (Wiley, 2016). CDR is not merely a set of technical standards but also a cultural construct. Organizational culture, defined as the shared values, norms, and assumptions that shape behavior, plays a critical role in enabling or constraining digital responsibility. According to Schein (2016), culture operates on multiple levels, from visible artifacts to deeply embedded mental models.13Schein, E. H. & Schein, P. A. Organizational Culture and Leadership, 5th edn (Wiley, 2016). In the context of CDR, culture influences how organizations interpret ethical dilemmas, react to regulatory uncertainty, and engage with digital innovation.13Schein, E. H. & Schein, P. A. Organizational Culture and Leadership, 5th edn (Wiley, 2016). Companies with strong ethical cultures are more likely to proactively address issues such as data privacy, algorithmic transparency, and digital fairness. Conversely, a culture of short-termism, secrecy, or technocratic dominance may hinder responsible digital transformation. In cultural terms, principles shape shared values and behavioral expectations. To become durable, these values must be translated into organizational mechanisms such as programs, roles and controls that embed CDR in everyday decision-making.14Luhmann, N. Organisation und Entscheidung, 3rd edn (Westdeutscher Verlag, 2000). 
To clarify how culture translates into mechanisms, we map Schein’s three layers (artifacts, espoused values, and basic assumptions) onto CDR (Table 2).13Schein, E. H. & Schein, P. A. Organizational Culture and Leadership, 5th edn (Wiley, 2016). Artifacts capture visible structures and practices (policies, documentation, logs); espoused values describe stated norms and leadership commitments; basic assumptions refer to deep, taken-for-granted beliefs that silently guide decisions (e.g., defaulting to human agency and harm minimization). This mapping foreshadows the governance artifacts and process gates operationalized in Chapter 4.

Schein’s three layers of organizational culture and their operationalization in CDR (illustrative examples).

While cultural theory emphasizes shared values and assumptions as drivers of responsible behavior, systems theory shifts the perspective toward the structural reproduction of responsibility within organizational communication and decision-making.14Luhmann, N. Organisation und Entscheidung, 3rd edn (Westdeutscher Verlag, 2000). In this logic, responsibility does not emerge naturally; it must be structurally embedded in decision premises, such as programs, communication channels, and role definitions.14Luhmann, N. Organisation und Entscheidung, 3rd edn (Westdeutscher Verlag, 2000). Thus, CDR requires organizations to establish internal structures that continuously reproduce ethical expectations through their own operations. This highlights the need for formalization, transparency, and cultural reinforcement of digital values within the decision architecture of the firm. Accordingly, CDR must be reproduced via decision premises (policies, RACI, process gates, and control sets) rather than through intentions alone.14Luhmann, N. Organisation und Entscheidung, 3rd edn (Westdeutscher Verlag, 2000). Chapter 3 operationalizes this linkage by mapping principles to concrete governance artifacts and risk-class-specific controls.

2.5 Ethical implementation and maturity models

In practice, the ethical implementation of CDR requires more than abstract principles; it demands tools and processes that guide organizational behavior.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). One promising approach is the use of digital ethics or AI ethics maturity models. These models outline progressive stages by which organizations can develop capabilities for ethical awareness, governance, and integration.7Vakkuri, V. et al. Time for AI (Ethics) Maturity Model Is Now. arXiv 2101.12701 (2021). As Vakkuri et al. (2021) argue, such maturity models help organizations assess “how well digital ethics has been integrated into their decision-making, communication, and technical practices.”7 They propose a framework that includes dimensions such as governance structures, stakeholder inclusion, training, and technical tools. Although initially developed for AI systems, these models provide valuable orientation for broader digital responsibility efforts, especially in complex or regulated industries.
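To make the idea of progressive stages more concrete, the following sketch scores a self-assessment across the four dimensions named above. The five-level scale and its labels, the dimension identifiers, and the rule that overall maturity is capped by the weakest dimension are illustrative assumptions. They are not part of the framework proposed by Vakkuri et al. (2021).

```python
from dataclasses import dataclass

# Hypothetical five-level scale; the labels are illustrative, not taken from Vakkuri et al.
LEVELS = {1: "initial", 2: "aware", 3: "defined", 4: "managed", 5: "optimizing"}

# The four dimensions named in the text; the identifiers are assumptions.
DIMENSIONS = ("governance", "stakeholder_inclusion", "training", "technical_tools")


@dataclass
class MaturityAssessment:
    scores: dict[str, int]  # dimension -> self-assessed level (1..5)

    def validate(self) -> None:
        missing = set(DIMENSIONS) - set(self.scores)
        if missing:
            raise ValueError(f"missing dimensions: {sorted(missing)}")
        for dim, level in self.scores.items():
            if level not in LEVELS:
                raise ValueError(f"{dim}: level {level} is outside 1..5")

    def overall_level(self) -> int:
        # Assumption: overall maturity is capped by the weakest dimension.
        self.validate()
        return min(self.scores[d] for d in DIMENSIONS)

    def gaps(self, target: int) -> dict[str, int]:
        # Levels still missing per dimension to reach the target stage.
        self.validate()
        return {d: target - self.scores[d] for d in DIMENSIONS if self.scores[d] < target}


assessment = MaturityAssessment(
    {"governance": 3, "stakeholder_inclusion": 2, "training": 4, "technical_tools": 3}
)
level = assessment.overall_level()
print(level, LEVELS[level])       # 2 aware
print(assessment.gaps(target=3))  # {'stakeholder_inclusion': 1}
```

Using the minimum rather than the average is a deliberate design choice. It stops a strong tooling score from masking weak stakeholder inclusion, and the output of `gaps` shows where improvement effort is needed.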

2.6 Research gaps and underexplored contexts

Although the literature on Corporate Digital Responsibility has grown significantly in recent years, important gaps remain. Existing research is conceptually rich but empirically thin, with a disproportionate focus on large enterprises and digital frontrunners. Small and medium-sized enterprises (SMEs), which often lack the resources to establish comprehensive CDR frameworks, are underrepresented in current studies. Ibrahim and Seppälä (2024) emphasize that many AI ethics guidelines are too abstract or complex for SMEs, creating a persistent gap between aspiration and practical capability.15Ibrahim, S. & Seppälä, T. AI guidelines and ethical readiness inside SMEs: A review and recommendations. Discov. Digit. Soc. 3, 87 (2024). Moreover, sector-specific dynamics and cross-cultural differences in CDR adoption have received limited attention, leaving open questions about how responsibility practices vary across industries and institutional environments.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Another underexplored dimension concerns the long-term impact of CDR measures. There is a lack of longitudinal studies that evaluate whether current initiatives lead to sustainable cultural and structural change.8Snyder, H. Literature reviews as a research methodology: An overview and guidelines. J. Bus. Res. 104, 333–339 (2019). This absence of empirical evidence is particularly problematic in light of emerging technologies such as generative AI or algorithmic management systems, which introduce novel risks and responsibilities.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). Without data on how organizations adapt to such dynamics over time, it remains unclear whether existing frameworks are sufficient to ensure trust and accountability. 
Finally, the interplay between digital ethics and broader diversity, equity, and inclusion (DEI) agendas has only recently begun to receive scholarly attention, despite its potential relevance for both fairness and legitimacy in digital ecosystems.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025).

2.7 Strategic potential and future research directions

Despite these limitations, CDR holds significant strategic potential for organizations. It can serve as a differentiator in trust-driven markets, a foundation for digital sustainability, and a lever for cross-sectoral innovation.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Companies that institutionalize CDR through proactive leadership, interdisciplinary collaboration, and stakeholder dialogue are more likely to secure reputational and operational advantages.

Future research should therefore examine the causal relationship between CDR and organizational performance, including effects on reputation, innovation capacity, and financial outcomes. Particular attention is needed for the evaluation of ethical tools such as audits, maturity models, or key performance indicators, which remain under-assessed in empirical settings. In addition, the role of regulators, civil society, and consumers in shaping the adoption of CDR deserves closer investigation, especially as governance moves beyond voluntary codes of conduct towards binding legal frameworks.16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024). A promising research avenue lies in integrating CDR more systematically into Environmental, Social and Governance (ESG) strategies. As investors increasingly demand evidence of ethical digital behavior, CDR could evolve from a voluntary standard into a competitive requirement. Examining how CDR maturity correlates with ESG ratings, stakeholder engagement, or employee retention would not only strengthen theoretical understanding but also provide practical guidance for managers facing growing external expectations.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). 11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). Taken together, the historical development, conceptual foundations, theoretical perspectives, and application fields of CDR illustrate both the progress of the academic debate and its remaining limitations. This synthesis provides the basis for the subsequent analysis of how CDR can be implemented in organizational practice, which is addressed in Chapter 3.

3 Practical implementation of CDR in organizations

While the conceptual foundations of Corporate Digital Responsibility are increasingly well defined, the decisive challenge for organizations lies in translating abstract principles into practical routines and structures.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Implementation is not a single event but a staged process that requires a combination of cultural, strategic, and operational adjustments. To avoid symbolic responsibility or “ethics washing,” companies must establish a systematic implementation logic that guides CDR from its initial anchoring in leadership awareness to its eventual integration into everyday organizational practices.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). This chapter therefore adopts a process perspective on CDR implementation. Rather than analyzing isolated elements, it focuses on the sequential phases that organizations typically undergo when embedding digital responsibility: first, initiation through awareness and strategic anchoring; second, the design of frameworks and governance structures; third, operational implementation in processes and practices; fourth, evaluation and feedback to ensure effectiveness and credibility; and finally, integration into governance and culture as part of a maturity trajectory.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). The phases are interdependent and iterative: awareness without governance remains vague, design without operationalization risks inertia, and implementation without evaluation loses credibility. 
Only through continuous feedback and cultural reinforcement can CDR mature into a sustainable organizational capability that contributes both to compliance and to long-term value creation.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).

3.1 The role of organizational culture and shared values

The initiation phase establishes a shared understanding in the top management team that CDR is not an add-on compliance topic but a strategic governance challenge with cultural preconditions.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Executive sponsorship, a clear mandate, and visible “tone from the top” are essential to make digital responsibility actionable and to prevent symbolic ethics programs that never reach operational practice. Culture is the infrastructure for responsible digital behavior: what leaders systematically pay attention to, measure, and reward will shape everyday decisions around data, AI, and design, and thereby set the conditions for implementation at scale.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). This thesis operationalizes the initiation phase as a managerial package consisting of an executive mandate, a short materiality exercise, and a baseline assessment of policies, skills, and controls.

A pragmatic entry point is a high-level CDR mandate linked to risk management, digital strategy, and sustainability objectives. This mandate should be informed by a short materiality exercise that prioritizes digital issues by impact and likelihood (e.g., algorithmic bias in HR, privacy in customer analytics, dark-pattern risks in UX), plus a baseline assessment of current policies, skills, and controls.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). We therefore propose anchoring the CDR mandate at this early stage in enterprise risk management (ERM) and portfolio steering. This makes risk appetite explicit from the outset and keeps resourcing proportionate to risk, which helps avoid later trade-offs between delivery speed and responsibility.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). The external environment reinforces the need for such an initiation. Evidence shows that AI exposure and adoption vary strongly across sectors and countries; functions such as finance and information and communication technology (ICT) carry higher exposure and, consequently, higher governance needs.17Filippucci, F., Gal, P., Laengle, K. & Schief, M. Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies. OECD Artificial Intelligence Papers No. 41 (OECD Publishing, 2025). 
Organizations that recognize this heterogeneity early can focus their initial CDR efforts where risk and impact concentrate (e.g., high-stakes decision systems), instead of attempting blanket policies that generate overhead without reducing risk.17Filippucci, F., Gal, P., Laengle, K. & Schief, M. Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies. OECD Artificial Intelligence Papers No. 41 (OECD Publishing, 2025). Finally, initiation should include a concise principle statement that sets out purpose, scope, and norms. To create legitimacy and direction, this statement should align with recognized external frameworks, most prominently the OECD AI Principles adopted in 2019, and with the firm’s existing CSR/ESG commitments.10OECD. AI and the Future of Social Protection in OECD Countries (OECD Publishing, 2025). 18OECD. Recommendation of the Council on Artificial Intelligence (OECD AI Principles), OECD/LEGAL/0449 (2019). The key is to pair this principled intent with resourcing, milestones, and an owner; otherwise, firms risk what Dörr calls “ethics theatre”, i.e., visible but ineffectual initiatives.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Gate deliverables of the initiation phase include an executive mandate and scope; a materiality map and short baseline report; and a CDR principle statement for internal consultation.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). 17Filippucci, F., Gal, P., Laengle, K. & Schief, M. Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies. OECD Artificial Intelligence Papers No. 41 (OECD Publishing, 2025). 
Given the heterogeneous exposure to AI across sectors and functions, organizations should prioritize the 3–5 highest-stakes use cases for early governance focus instead of adopting blanket policies.17Filippucci, F., Gal, P., Laengle, K. & Schief, M. Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies. OECD Artificial Intelligence Papers No. 41 (OECD Publishing, 2025).
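The materiality exercise and the focus on the highest-stakes use cases can be sketched as a simple scoring routine. Each candidate issue is rated for impact and likelihood and ranked by the product of the two. The top entries then become the initial governance focus. The 1–5 scales, the multiplicative score, and the ratings are hypothetical assumptions for illustration. Only the example issues come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DigitalIssue:
    name: str
    impact: int      # 1 (minor) .. 5 (severe); illustrative scale
    likelihood: int  # 1 (rare) .. 5 (almost certain); illustrative scale

    @property
    def score(self) -> int:
        # Common risk-matrix heuristic: impact x likelihood, range 1..25.
        return self.impact * self.likelihood


def prioritize(issues: list[DigitalIssue], top_k: int = 3) -> list[DigitalIssue]:
    """Return the top_k issues by score; ties go to higher impact, then name."""
    if top_k < 1:
        raise ValueError("top_k must be at least 1")
    ranked = sorted(issues, key=lambda i: (-i.score, -i.impact, i.name))
    return ranked[:top_k]


# Hypothetical ratings for the example issues mentioned in the text.
issues = [
    DigitalIssue("algorithmic bias in HR screening", impact=5, likelihood=3),
    DigitalIssue("privacy in customer analytics", impact=4, likelihood=4),
    DigitalIssue("dark patterns in checkout UX", impact=3, likelihood=3),
    DigitalIssue("internal IT FAQ chatbot", impact=1, likelihood=2),
]

for issue in prioritize(issues, top_k=3):
    print(f"{issue.score:>2}  {issue.name}")
```

Breaking ties by impact reflects the intuition that severe but less likely harms (such as biased hiring decisions) deserve attention before frequent but minor ones. A firm could reasonably weight the two factors differently.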

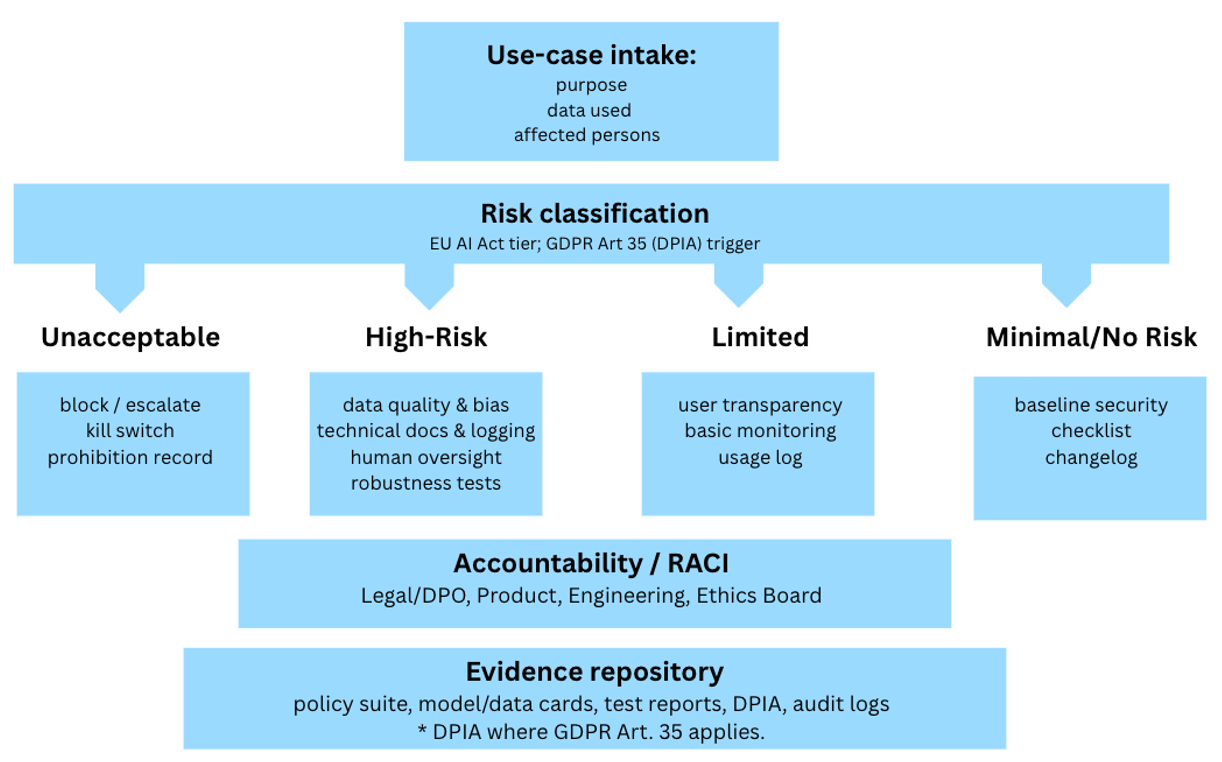

3.2 Managerial roles and responsibilities in CDR

CDR is increasingly recognized as a leadership issue. Managers are responsible for shaping the strategic direction, resource allocation, and internal structures that enable or inhibit digital responsibility.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). This includes integrating ethical criteria into innovation processes, defining accountability structures for data and AI systems, and fostering transparency in stakeholder communication.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). 6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Moreover, CDR is becoming relevant for risk management, corporate governance, and reputation building.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). As regulatory frameworks such as the EU AI Act gain traction, companies will be required to demonstrate proactive digital compliance and ethical foresight.16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024). In this context, CDR can be seen as a strategic resource that enhances trust, resilience, and long-term value creation.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). It challenges managers to go beyond legal minimalism and to develop an organizational mindset that treats digital responsibility as a core element of corporate leadership.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). 
In the design phase, intent is translated into governance and policies that can travel into daily work. A clear RACI (who is Responsible, Accountable, Consulted, Informed) across the board/exec level, a named CDR lead, specialist roles (e.g., Data Protection Officer, Head of Data/AI, Model Risk Officer), and a standing escalation body (e.g., a Data & AI Ethics Board) are foundational.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Defining decision rights up front reduces ambiguity and accelerates responsible delivery because product, engineering, and compliance know when to self-certify and when to escalate.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). The policy architecture should be layered (policy to standards to procedures).6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). At a minimum, firms should put five basics in place: a concise CDR policy that sets principles and risk appetite; a clear AI and algorithm standard that defines risk classes, fairness/robustness/explainability, when human review is required, and the documentation to keep; a data and privacy standard that covers minimization, consent, retention and the triggers for data-protection impact assessments; a digital design standard that bans dark patterns and requires accessible, inclusive design; and practical guidance on sustainable digital operations, including efficient cloud use, lifecycle management and e-waste reduction. This layered structure enables proportionality: higher-risk systems carry stronger controls, while low-risk tools face light-touch requirements.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). 16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024). 19Mitchell, M. et al. Model cards for model reporting. In Proc. Conf. on Fairness, Accountability, and Transparency, 220–229 (ACM, 2019). 20European Union. Regulation (EU) 2016/679 (General Data Protection Regulation). Off. J. Eur. Union L 119, 1–88 (2016). A risk-based approach is particularly important because AI adoption is uneven and concentrated in specific sectors and functions.17Filippucci, F., Gal, P., Laengle, K. & Schief, M. Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies. OECD Artificial Intelligence Papers No. 41 (OECD Publishing, 2025). Using a simple risk taxonomy that ties control sets to use-case criticality (e.g., talent decisions, credit scoring, safety-relevant operations) aligns governance effort with exposure and recognizes that high-intensity adoption requires stronger guardrails and assurance. This also prepares organizations for evolving regulatory regimes that distinguish risk classes and prescribe differentiated obligations.16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024).
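The proportionality logic described above can be expressed as a small lookup. A risk taxonomy tied to use-case criticality determines the control set, and each risk class determines whether a team may self-certify or must escalate. The three risk classes, the control names, and the classification rule are hypothetical simplifications. They are not the legal risk categories of the AI Act, onto which a real taxonomy would still need to be mapped.

```python
from enum import Enum


class RiskClass(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical control sets; each class includes all controls of the class below it.
_LOW = frozenset({"inventory_entry", "privacy_check"})
_MEDIUM = _LOW | {"model_card", "bias_test", "dpia_screening"}
_HIGH = _MEDIUM | {"human_review", "full_dpia", "ethics_board_signoff", "audit_trail"}
CONTROLS = {RiskClass.LOW: _LOW, RiskClass.MEDIUM: _MEDIUM, RiskClass.HIGH: _HIGH}

# High-stakes domains taken from the text's examples (talent, credit, safety).
HIGH_STAKES_DOMAINS = frozenset({"talent", "credit", "safety"})


def classify(domain: str, affects_individuals: bool, fully_automated: bool) -> RiskClass:
    """Toy criticality rule: domain first, then impact on people or automation level."""
    if domain in HIGH_STAKES_DOMAINS:
        return RiskClass.HIGH
    if affects_individuals or fully_automated:
        return RiskClass.MEDIUM
    return RiskClass.LOW


def decision_route(risk: RiskClass) -> str:
    # Decision rights: teams self-certify below HIGH and escalate HIGH to the board.
    return "escalate to ethics board" if risk is RiskClass.HIGH else "self-certify"


risk = classify("credit", affects_individuals=True, fully_automated=False)
print(risk.name, "|", decision_route(risk), "|", sorted(CONTROLS[risk]))
```

Making each control set a superset of the one below keeps the taxonomy monotonic. Moving a use case to a higher class can only add controls and never silently remove one.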

Operationalizing CDR by risk class

Design must also include a capability blueprint and enablement plan.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). Beyond policies, firms need tools (e.g., bias-testing suites, model registries, automated audit trails), role-based upskilling (engineers on robustness and bias testing; product on ethical discovery; legal/compliance on AI risk), and communities of practice or “champions” that diffuse patterns and accelerate adoption of responsible-by-design methods.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). 21National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0) – Playbook (NIST, 2023). Embedding these capabilities into leadership, workforce, and work-architecture initiatives is more effective than standalone training because it links learning to real workflows and incentives.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020).

Gate deliverables of the design phase include an approved RACI and Ethics Board charter; a published policy suite with control sets mapped by risk class; and a capability roadmap with training milestones and the tooling required to make compliance the easy path in delivery.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). 11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). 16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024). These deliverables operationalize the strategic intent from initiation and create the preconditions for systematic, auditable implementation in the next phase.
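A governance office could run a simple checklist on the gate deliverables named for the initiation and design phases before closing a phase. The deliverable identifiers below paraphrase the text. Representing each gate as a set and blocking the gate on any missing item are assumptions about how such a check might be automated.

```python
# Gate deliverables paraphrased from the initiation and design phases; identifiers are hypothetical.
GATE_DELIVERABLES: dict[str, frozenset[str]] = {
    "initiation": frozenset({
        "executive_mandate_and_scope",
        "materiality_map",
        "baseline_report",
        "principle_statement",
    }),
    "design": frozenset({
        "raci",
        "ethics_board_charter",
        "policy_suite_with_risk_class_controls",
        "capability_roadmap",
    }),
}


def check_gate(phase: str, submitted: set[str]) -> tuple[bool, list[str]]:
    """Return (passed, missing deliverables) for the given phase gate."""
    if phase not in GATE_DELIVERABLES:
        raise KeyError(f"unknown phase: {phase}")
    missing = sorted(GATE_DELIVERABLES[phase] - submitted)
    return (not missing, missing)


passed, missing = check_gate("design", {"raci", "ethics_board_charter", "capability_roadmap"})
print(passed, missing)  # False ['policy_suite_with_risk_class_controls']
```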

In practice, CDR tools must be embedded into specific phases of the organizational workflow. Model cards, for example, are completed during the testing phase of an AI system to document training data, evaluation results, and intended use, ensuring traceability before deployment.19Mitchell, M. et al. Model cards for model reporting. In Proc. Conf. on Fairness, Accountability, and Transparency, 220–229 (ACM, 2019). Datasheets for datasets are typically created at the point of data intake and updated whenever substantial changes to the dataset occur, thereby providing continuous transparency.22Gebru, T. et al. Datasheets for datasets. Commun. ACM 64(12), 86–92 (2021). Bias-testing suites are most effective when integrated into the continuous integration (CI) pipeline, where they can automatically flag discriminatory patterns during model updates.21National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0) – Playbook (NIST, 2023). Similarly, Data Protection Impact Assessments (DPIAs) should be initiated at the discovery stage of new projects and reviewed by compliance before implementation.20European Union. Regulation (EU) 2016/679 (General Data Protection Regulation). Off. J. Eur. Union L 119, 1–88 (2016). These contextualized applications ensure that tools are not merely symbolic artifacts but actively shape decision-making and accountability.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Microsoft offers a documented example of institutionalizing managerial roles and governance for Responsible AI.23Microsoft. Responsible AI Standard (v2) (Microsoft Corporation, 2022; updates 2023–2025). The design mirrors this thesis’s model: executive principles (espoused values), formal roles and review bodies (mechanisms), and auditable artifacts (documentation and logs) across the lifecycle. 
In recent years, Microsoft established an Office of Responsible AI and a company-wide Responsible AI Standard, supported by interdisciplinary review committees that evaluate the ethical implications of new technologies prior to release.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). 23Microsoft. Responsible AI Standard (v2) (Microsoft Corporation, 2022; updates 2023–2025). Responsibility is shared across managerial levels: executives define the ethical principles; specialized committees oversee development and enforce accountability; and product teams document and justify their design choices in line with the company’s AI principles of fairness, reliability, safety, privacy, inclusiveness, transparency and accountability.23Microsoft. Responsible AI Standard (v2) (Microsoft Corporation, 2022; updates 2023–2025). This governance model illustrates how CDR can move beyond abstract commitments and become a structured management practice. It also demonstrates that digital responsibility requires clear role allocation, interdisciplinary collaboration, and visible leadership support, all of which are central to embedding CDR into organizational routines.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).
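For the bias-testing suites integrated into the CI pipeline, a check can be as simple as a test that fails the build when a fairness metric crosses a threshold. The following sketch uses the disparate impact ratio, defined as the lowest group selection rate divided by the highest. It applies the four-fifths (0.8) rule of thumb from US employment-selection practice. The metric, the threshold, and the prediction data are assumptions. A real suite would cover several metrics, intersecting groups, and statistical uncertainty.

```python
from collections import defaultdict


def selection_rates(predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive decisions (1) per group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have equal length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(predictions: list[int], groups: list[str]) -> float:
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group selected at all: no disparity in selection rates
    return min(rates.values()) / highest


def test_model_meets_four_fifths_rule() -> None:
    # Hypothetical held-out predictions from the candidate model.
    preds = [1, 1, 0, 1, 1, 1, 0, 1, 1]
    groups = ["a", "a", "a", "a", "a", "b", "b", "b", "b"]
    ratio = disparate_impact_ratio(preds, groups)
    # A failing assertion fails the CI job and blocks the model update.
    assert ratio >= 0.8, f"disparate impact ratio {ratio:.2f} below 0.8"
```

Because the function name starts with `test_`, a test runner such as pytest would collect it automatically in the pipeline. Here group "a" is selected at a rate of 0.8 and group "b" at 0.75, which gives a ratio of about 0.94, so the check passes.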

3.3 Drivers and barriers of implementation

The implementation of Corporate Digital Responsibility is not only hindered by obstacles but also driven by powerful incentives and enabling conditions.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). One of the most significant drivers is the increasing regulatory pressure on companies to address digital risks proactively.16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024). Regulations such as the European Union’s General Data Protection Regulation (GDPR) and the AI Act, adopted in 2024, establish binding rules for transparency, fairness, and accountability in the digital domain.20European Union. Regulation (EU) 2016/679 (General Data Protection Regulation). Off. J. Eur. Union L 119, 1–88 (2016). Organizations are therefore motivated to integrate CDR not merely as a voluntary commitment but as a means to secure compliance and reduce the risk of legal sanctions.16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024). In addition to regulation, societal expectations act as a strong external driver.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Public debates about data misuse, algorithmic bias, and digital exclusion have heightened stakeholder awareness, and companies are increasingly evaluated by customers, investors, and employees on their handling of digital responsibility.3Floridi, L. et al. AI4People—An Ethical Framework for a Good AI Society. Minds Mach. 28, 689–707 (2018). 4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Aligning with these expectations offers reputational benefits and can strengthen legitimacy in the market.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020).

Another important driver is the recognition of strategic opportunities associated with CDR. By embedding ethical and transparent digital practices, companies can differentiate themselves from competitors, build trust with stakeholders, and foster long-term relationships with customers. Trust is particularly crucial in digital markets, where many products and services are intangible and depend on the willingness of users to share personal data or rely on algorithmic decision-making.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Firms that actively demonstrate responsibility are more likely to attract and retain customers in the long run.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). Moreover, CDR can act as a driver of innovation by encouraging organizations to design products and processes that combine technological efficiency with ethical acceptability.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). This dual orientation enables companies to anticipate societal concerns early on and to transform them into new forms of value creation.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021).

Internal organizational factors also play a role as drivers. Leadership commitment is frequently cited as a decisive precondition for successful implementation. When top management clearly signals the importance of digital responsibility and allocates resources accordingly, CDR becomes anchored in strategy rather than remaining a peripheral initiative.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Likewise, corporate culture can act as a driver when values such as transparency, fairness, and accountability are already embedded in everyday practices.13Schein, E. H. & Schein, P. A. Organizational Culture and Leadership, 5th edn (Wiley, 2016). A supportive culture facilitates the translation of abstract ethical principles into concrete decisions at the operational level. Finally, cross-functional collaboration and the involvement of diverse expertise, ranging from legal compliance and IT security to ethics and sustainability, help to create an environment in which CDR is not viewed as a burden but as a shared responsibility across departments.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).

Taken together, these drivers illustrate that the success of CDR depends on both external forces, such as regulation and societal expectations, and internal enablers, such as leadership commitment and cultural alignment.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). They provide the necessary momentum for companies to move beyond symbolic responsibility and to integrate digital ethics into their strategic and operational routines.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). 6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).

The implementation phase operationalizes the principles and governance structures defined earlier by embedding CDR into concrete organizational processes. This step is crucial: without integration into product development, data governance, human resource practices, and procurement, CDR risks remaining a symbolic exercise.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Lobschat et al. (2021) highlight that CDR can only generate value if it is translated into tangible management activities that cut across functions, rather than remaining a high-level statement.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021).

A first area of implementation concerns the product and service lifecycle, where responsibility must be embedded “by design”.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). This involves integrating ethical reflection and risk assessment as early as the discovery and requirements phases of digital initiatives, ensuring that principles such as privacy, fairness, transparency, and sustainability are considered alongside functional and business objectives. A practical example is the use of standardized documentation such as model cards and datasheets for datasets to ensure traceability and accountability in AI systems.19Mitchell, M. et al. Model cards for model reporting. In Proc. Conf. on Fairness, Accountability, and Transparency, 220–229 (ACM, 2019). 22Gebru, T. et al. Datasheets for datasets. Commun. ACM 64(12), 86–92 (2021).
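A release check can make this documentation a precondition for deployment rather than an afterthought by blocking a model whose model card or datasheet is incomplete. The section names follow those proposed by Mitchell et al. (2019) for model cards and by Gebru et al. (2021) for datasheets. Encoding them as required dictionary keys, and the check itself, are illustrative assumptions rather than a standard format.

```python
# Section names follow Mitchell et al. (2019) and Gebru et al. (2021); the dict format is an assumption.
MODEL_CARD_SECTIONS = (
    "model_details", "intended_use", "factors", "metrics", "evaluation_data",
    "training_data", "quantitative_analyses", "ethical_considerations",
    "caveats_and_recommendations",
)
DATASHEET_SECTIONS = (
    "motivation", "composition", "collection_process",
    "preprocessing_cleaning_labeling", "uses", "distribution", "maintenance",
)


def missing_sections(doc: dict[str, str], required: tuple[str, ...]) -> list[str]:
    """Required sections that are absent or contain only whitespace."""
    return [s for s in required if not doc.get(s, "").strip()]


def release_allowed(model_card: dict[str, str], datasheet: dict[str, str]) -> bool:
    # Hypothetical deployment gate: both documents must be complete.
    return not (missing_sections(model_card, MODEL_CARD_SECTIONS)
                or missing_sections(datasheet, DATASHEET_SECTIONS))


# Hypothetical, deliberately incomplete documentation for a CV-screening model.
card = {
    "model_details": "Gradient-boosted classifier, version 1.3",
    "intended_use": "Ranking applications for recruiter review; not for automatic rejection",
    "training_data": "Historical applications; see the dataset's datasheet",
    "ethical_considerations": "   ",
}
datasheet = {section: "documented" for section in DATASHEET_SECTIONS}

print(release_allowed(card, datasheet))
print(missing_sections(card, MODEL_CARD_SECTIONS))
```

Treating whitespace-only sections as missing prevents a card from passing the gate with placeholder entries, a small guard against the symbolic documentation that the text warns about.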

Second, data governance and privacy practices play a central role. Firms must ensure data minimization, explicit consent management, retention control, and purpose limitation, while also embedding privacy and data ethics requirements into vendor contracts and system procurement.20European Union. Regulation (EU) 2016/679 (General Data Protection Regulation). Off. J. Eur. Union L 119, 1–88 (2016). As the OECD stresses, digital productivity gains can only be achieved sustainably if firms systematically address the risks of algorithmic bias, data misuse, and unequal access.17Filippucci, F., Gal, P., Laengle, K. & Schief, M. Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies. OECD Artificial Intelligence Papers No. 41 (OECD Publishing, 2025).
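Purpose limitation and retention control can be enforced as explicit checks in data pipelines. The sketch below is a simplified illustration under stated assumptions: the retention periods, purpose names, and the `PersonalRecord` type are hypothetical, a record is treated as expired once the longest retention period among its declared purposes has passed, and a record without any documented retention basis is treated as due for deletion.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical retention policy: maximum storage period per declared purpose.
RETENTION = {
    "order_fulfilment": timedelta(days=365),
    "marketing": timedelta(days=180),
}


@dataclass(frozen=True)
class PersonalRecord:
    subject_id: str
    purposes: frozenset[str]  # purposes declared to the data subject at collection
    collected_on: date


def may_use(record: PersonalRecord, purpose: str) -> bool:
    """Purpose limitation: processing is allowed only for a declared purpose."""
    return purpose in record.purposes


def is_expired(record: PersonalRecord, today: date) -> bool:
    """Retention control: expired once the longest applicable period has passed."""
    limits = [RETENTION[p] for p in record.purposes if p in RETENTION]
    if not limits:
        return True  # design choice: no documented retention basis means delete
    return today > record.collected_on + max(limits)


record = PersonalRecord("u-001", frozenset({"order_fulfilment"}), date(2024, 1, 1))
print(may_use(record, "marketing"))          # False: purpose was never declared
print(is_expired(record, date(2025, 6, 1)))  # True: the 365-day limit has passed
```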

Third, human resources and workforce practices are key for implementation. Deloitte’s analysis shows that employees are increasingly embedded in “superteams,” where human expertise and AI complement each other. Making such teams effective requires not only technological infrastructure, but also cultural enablement and role-specific training in ethical digital practices.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). In practice, this means equipping engineers with tools for bias testing, product managers with methods for ethical discovery, and leaders with oversight skills that include digital responsibility.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).

Fourth, implementation extends to procurement and third-party management. Many critical AI and data-driven functions are sourced externally, which requires organizations to define binding requirements for their partners. This can be achieved through supplier clauses on algorithmic governance, audit rights, and transparency obligations.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Dörr (2020) emphasizes that without extending responsibility requirements to external partners, organizations risk creating “responsibility gaps” where ethical risks are effectively outsourced without oversight.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).

Finally, implementation must touch marketing and user interaction practices. Transparency in personalization, bans on dark patterns, and commitments to accessible and inclusive design strengthen user trust and differentiate responsible companies in competitive markets. As Lobschat et al. (2021) underline, CDR is not merely defensive risk management, but also a driver of trust-based relationships with customers and other stakeholders, which in turn can enhance brand reputation and long-term value creation.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021).

Deliverables of this phase include the integration of CDR checkpoints into existing processes such as the system development lifecycle (SDLC), the provision of technical tools like bias testing suites or automated DPIA workflows, training curricula with clear completion targets, and supplier playbooks to align external partners with the company’s responsibility standards.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). By systematically embedding these practices, organizations ensure that CDR is not peripheral but part of their everyday operations, linking culture, governance, and technology into an actionable whole.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).
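One way a bias testing suite can act as an SDLC checkpoint is a fairness gate that runs in continuous integration and fails the build when a metric exceeds a tolerance. The sketch below assumes demographic parity difference (the gap between the highest and lowest rate of positive predictions across groups) as the metric and a hypothetical tolerance of 0.10; both are illustrative choices, and production suites typically compute several complementary fairness metrics.

```python
from collections import defaultdict

# Hypothetical tolerance; in practice set by the governance body per use case.
MAX_PARITY_GAP = 0.10


def selection_rates(predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive predictions (1) within each group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions: list[int], groups: list[str]) -> float:
    """Largest minus smallest selection rate across groups."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def bias_gate(predictions: list[int], groups: list[str],
              max_gap: float = MAX_PARITY_GAP) -> bool:
    """True if the model passes the checkpoint, False if the build should fail."""
    return demographic_parity_difference(predictions, groups) <= max_gap


preds = [1, 0, 1, 1, 0, 1, 0, 0]
grps = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(demographic_parity_difference(preds, grps))  # 0.5
print(bias_gate(preds, grps))                      # False
```

In a CI job, a small wrapper script would exit with a non-zero status whenever `bias_gate` returns `False`, so the failing check blocks a merge in the same way as a failing unit test.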

Different governance and implementation approaches for CDR come with specific advantages and disadvantages. A centralized ethics board offers consistency and faster escalation paths, but risks becoming a bottleneck and being too detached from operational practice. In contrast, a federated model with ethics champions in each business unit provides domain expertise and scalability, yet often requires stronger coordination mechanisms to avoid fragmentation. Similarly, pre-release audits of AI systems are highly preventive and protect reputation, but may slow down time-to-market. Post-release monitoring, by contrast, generates real-world evidence and allows for agile learning, but exposes organizations to higher risks because critical flaws may only surface after deployment. Automated bias checks offer scalability and consistency, whereas manual reviews allow for greater contextual sensitivity but demand significant resources and may suffer from inconsistency.21National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0) – Playbook (NIST, 2023). These trade-offs illustrate that effective CDR implementation depends not on one-size-fits-all solutions, but on proportional and context-sensitive choices.21National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0) – Playbook (NIST, 2023).
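Proportionality can be made operational by mapping each use case's risk tier to a minimum set of controls. The sketch below assumes three hypothetical tiers and assigns the approaches discussed above accordingly: automated checks and post-release monitoring for every system, manual review from medium risk upwards, and pre-release audits plus ethics board escalation for high risk. Tier names and the mapping are illustrative assumptions, not a prescribed standard.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical mapping: each control applies from its minimum tier upwards.
CONTROL_MIN_TIER = {
    "automated_bias_check": RiskTier.LOW,
    "post_release_monitoring": RiskTier.LOW,
    "manual_contextual_review": RiskTier.MEDIUM,
    "pre_release_audit": RiskTier.HIGH,
    "ethics_board_escalation": RiskTier.HIGH,
}


def required_controls(tier: RiskTier) -> list[str]:
    """Return the controls a use case of the given tier must pass, sorted by name."""
    return sorted(c for c, min_tier in CONTROL_MIN_TIER.items() if tier >= min_tier)


print(required_controls(RiskTier.LOW))
# ['automated_bias_check', 'post_release_monitoring']
```

Keeping the mapping as data rather than logic means a governance body can tighten or relax requirements per tier without changing the delivery pipelines that consult it.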

The experience of Deutsche Telekom illustrates both the opportunities and challenges of embedding CDR at scale.24Deutsche Telekom. Corporate Digital Responsibility (company website; accessed 2025). As one of the first companies in Germany to publish an explicit Corporate Digital Responsibility strategy, Telekom defined four central action areas: data privacy and security, digital participation, sustainability in digitalization, and media competence. These pillars reflect the recognition that CDR cannot be confined to technical compliance but must address wider societal expectations. The company faced several challenges in translating these principles into practice. First, existing CSR structures had to be adapted to accommodate digital topics, which required new forms of cross-functional cooperation between IT, legal, compliance, and sustainability departments. Second, the organization had to balance commercial objectives with the societal expectation to provide transparent and accessible digital services.24Deutsche Telekom. Corporate Digital Responsibility (company website; accessed 2025). To overcome these barriers, Telekom introduced specific initiatives such as transparent reporting on data protection, projects to enhance digital inclusion (e.g., promoting access for disadvantaged groups), and programs to improve employee awareness of digital ethics.25Deutsche Telekom. Corporate Responsibility Report 2024 (Deutsche Telekom AG, 2025). The case shows that CDR implementation can encounter significant resistance when existing structures are not designed for digital risks, but that these barriers can be addressed through explicit strategies, communication efforts, and the integration of CDR goals into broader corporate governance frameworks.24Deutsche Telekom. Corporate Digital Responsibility (company website; accessed 2025).

3.4 Evaluation phase: Monitoring and feedback

The evaluation phase ensures that CDR does not remain static but becomes a learning process supported by measurable outcomes.21National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0) – Playbook (NIST, 2023). Monitoring and feedback loops allow organizations to assess whether principles and practices defined in earlier phases translate into effective behavior and performance.21National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0) – Playbook (NIST, 2023). Without systematic evaluation, CDR initiatives risk degenerating into symbolic compliance that cannot be verified.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). Lobschat et al. (2021) stress that organizations must treat digital responsibility as a dynamic capability, continuously adapting practices in response to technological advances and stakeholder expectations.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021).

A first element of evaluation is the definition of measurable indicators (KPIs).4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). Typical CDR-related metrics include the percentage of AI systems subjected to bias testing, the number of completed privacy impact assessments, employee training coverage in digital ethics, and stakeholder trust scores.21National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0) – Playbook (NIST, 2023). The leadership literature and management system standards such as ISO/IEC 42001, which requires organizations to monitor, measure, analyse, and evaluate the performance of their AI management system, suggest that such quantitative indicators help sustain digital transformation because they create accountability and enable benchmarking across units.26Antonakis, J. & Day, D. V. Leadership: Past, present, and future. Annu. Rev. Organ. Psychol. Organ. Behav. 8, 197–236 (2021). 29ISO/IEC 42001:2024. Artificial intelligence management system — Requirements (International Organization for Standardization, 2024).
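Several of these indicators reduce to simple ratios over an inventory of AI systems and HR training records. The sketch below assumes a hypothetical inventory type `AISystem` with two boolean flags and counts one DPIA per system; the system names and figures are illustrative, not real data. Stakeholder trust scores usually come from surveys and are therefore not computed here.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    bias_tested: bool
    dpia_completed: bool


def percentage(part: int, whole: int) -> float:
    """Percentage with a guard against division by zero."""
    return 100.0 * part / whole if whole else 0.0


def cdr_kpis(systems: list[AISystem], trained_staff: int, total_staff: int) -> dict[str, float]:
    """Compute three of the CDR indicators named in the text from an inventory."""
    return {
        "pct_ai_systems_bias_tested": percentage(sum(s.bias_tested for s in systems), len(systems)),
        "completed_dpias": float(sum(s.dpia_completed for s in systems)),
        "pct_staff_trained": percentage(trained_staff, total_staff),
    }


# Illustrative inventory (hypothetical systems and figures).
inventory = [
    AISystem("cv-screening", bias_tested=True, dpia_completed=True),
    AISystem("support-chatbot", bias_tested=False, dpia_completed=True),
    AISystem("churn-model", bias_tested=True, dpia_completed=False),
    AISystem("dynamic-pricing", bias_tested=True, dpia_completed=True),
]
print(cdr_kpis(inventory, trained_staff=420, total_staff=600))
# {'pct_ai_systems_bias_tested': 75.0, 'completed_dpias': 3.0, 'pct_staff_trained': 70.0}
```

Computing the figures per business unit with the same function is what makes the benchmarking across units mentioned above possible.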

Second, organizations need structured review mechanisms.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020). These can take the form of regular ethics board meetings, independent audits, or stakeholder panels. Dörr (2020) highlights that formalized feedback processes are crucial to avoid “ethics washing”: only when governance bodies are mandated to review evidence and recommend adjustments can responsibility initiatives maintain credibility.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).

Third, regulatory alignment and external standards are key.16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024). The OECD points out that AI adoption and productivity effects differ across sectors and countries, which implies that firms must tailor their evaluation practices to their sectoral and national context, including an evolving regulatory landscape.17Filippucci, F., Gal, P., Laengle, K. & Schief, M. Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies. OECD Artificial Intelligence Papers No. 41 (OECD Publishing, 2025). For example, the EU AI Act introduces risk-based obligations that require firms to classify their AI use cases by risk level and, for high-risk systems, to maintain detailed technical documentation, undergo conformity assessments, and keep auditable records.16European Union. Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (AI Act). Off. J. Eur. Union L 1689, 1– (2024).
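A use-case register that records each system's risk category and links every obligation to evidence is one way to keep such classifications auditable. The sketch below is heavily simplified: the four category labels follow the AI Act's broad risk-based structure, but the obligation lists are an illustrative selection rather than a complete reading of the Regulation, and the `UseCase` type, the evidence identifiers, and the example entry are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class AIActCategory(Enum):
    PROHIBITED = "prohibited practice"
    HIGH_RISK = "high-risk"
    TRANSPARENCY = "transparency obligations"
    MINIMAL = "minimal risk"


# Illustrative selection of obligations per category (not exhaustive).
OBLIGATIONS = {
    AIActCategory.PROHIBITED: ["do not place on the market or put into service"],
    AIActCategory.HIGH_RISK: [
        "risk management system",
        "technical documentation",
        "record-keeping (logs)",
        "human oversight",
        "conformity assessment",
    ],
    AIActCategory.TRANSPARENCY: ["disclose AI interaction to users (e.g. chatbots)"],
    AIActCategory.MINIMAL: [],
}


@dataclass
class UseCase:
    name: str
    category: AIActCategory
    evidence: dict[str, str] = field(default_factory=dict)  # obligation -> record ID


def open_obligations(uc: UseCase) -> list[str]:
    """Obligations for the use case's category that have no recorded evidence yet."""
    return [o for o in OBLIGATIONS[uc.category] if o not in uc.evidence]


uc = UseCase("cv-screening", AIActCategory.HIGH_RISK,
             {"technical documentation": "DOC-123"})
print(open_obligations(uc))
# ['risk management system', 'record-keeping (logs)', 'human oversight', 'conformity assessment']
```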

Fourth, feedback loops must be continuous and adaptive.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). Deloitte’s Global Human Capital Trends (2020) study shows that high-performing organizations in the digital age treat evaluation as part of an agile cycle, where KPIs are reviewed quarterly and insights are fed back into training, process design, and policy adjustments. This dynamic cycle prevents CDR from becoming a mere annual reporting exercise and instead anchors it in everyday work.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020).

Finally, evaluation is not only an internal exercise but also involves external communication and accountability.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). By publishing results in sustainability or CDR-specific reports, firms create transparency that strengthens stakeholder trust.4Lobschat, L. et al. Corporate digital responsibility. J. Bus. Res. 122, 875–888 (2021). This externalization of evaluation data also builds legitimacy in the eyes of regulators and the public, showing that CDR is not only a managerial priority but also a measurable performance domain.

Deliverables of this phase include a CDR KPI dashboard integrated into risk management systems, a calendar of review meetings and external audits, documented feedback protocols, and a reporting structure that feeds into corporate sustainability disclosures.11Deloitte. 2020 Global Human Capital Trends: The social enterprise at work—Paradox as a path forward (Deloitte Insights, 2020). These mechanisms ensure that CDR remains auditable, adaptable, and credible over time.6Dörr, S. Praxisleitfaden Corporate Digital Responsibility: Unternehmerische Verantwortung und Nachhaltigkeitsmanagement im Digitalzeitalter (Springer Gabler, 2020).
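A KPI dashboard typically condenses indicators into traffic-light statuses and feeds red items into the next review meeting. The sketch below assumes hypothetical KPI names, targets, and amber margins, treats higher values as better for every KPI, and flags a KPI with no reported value as red so that missing data is escalated rather than silently ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiTarget:
    target: float        # value at or above which the KPI is on track
    amber_margin: float  # shortfall below target still tolerated as "amber"


def rag_status(value: float, t: KpiTarget) -> str:
    """Traffic-light status for a KPI where higher values are better."""
    if value >= t.target:
        return "green"
    if value >= t.target - t.amber_margin:
        return "amber"
    return "red"


def dashboard(values: dict[str, float], targets: dict[str, KpiTarget]) -> dict[str, str]:
    """Status per KPI; a KPI with no reported value is flagged red."""
    return {
        name: rag_status(values[name], t) if name in values else "red"
        for name, t in targets.items()
    }


def escalations(status: dict[str, str]) -> list[str]:
    """KPIs to put on the agenda of the next review meeting."""
    return [name for name, s in status.items() if s == "red"]


# Hypothetical targets and reported values.
targets = {
    "pct_ai_systems_bias_tested": KpiTarget(target=90.0, amber_margin=10.0),
    "pct_staff_trained": KpiTarget(target=95.0, amber_margin=5.0),
    "completed_dpias": KpiTarget(target=4.0, amber_margin=1.0),
}
values = {"pct_ai_systems_bias_tested": 85.0, "pct_staff_trained": 70.0}
status = dashboard(values, targets)
print(status)
# {'pct_ai_systems_bias_tested': 'amber', 'pct_staff_trained': 'red', 'completed_dpias': 'red'}
print(escalations(status))
# ['pct_staff_trained', 'completed_dpias']
```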